The Origin: Seeing Through the Noise

In robotics and autonomous navigation, hardware sensors rarely provide a perfect picture of the world. Ultrasonic and sonar sensors are notoriously noisy: their pulses glance off angled surfaces, pick up environmental interference, and produce "ghost echoes." I wanted to build a radar system that did not just display every raw ping, but could tell a real object apart from noise.

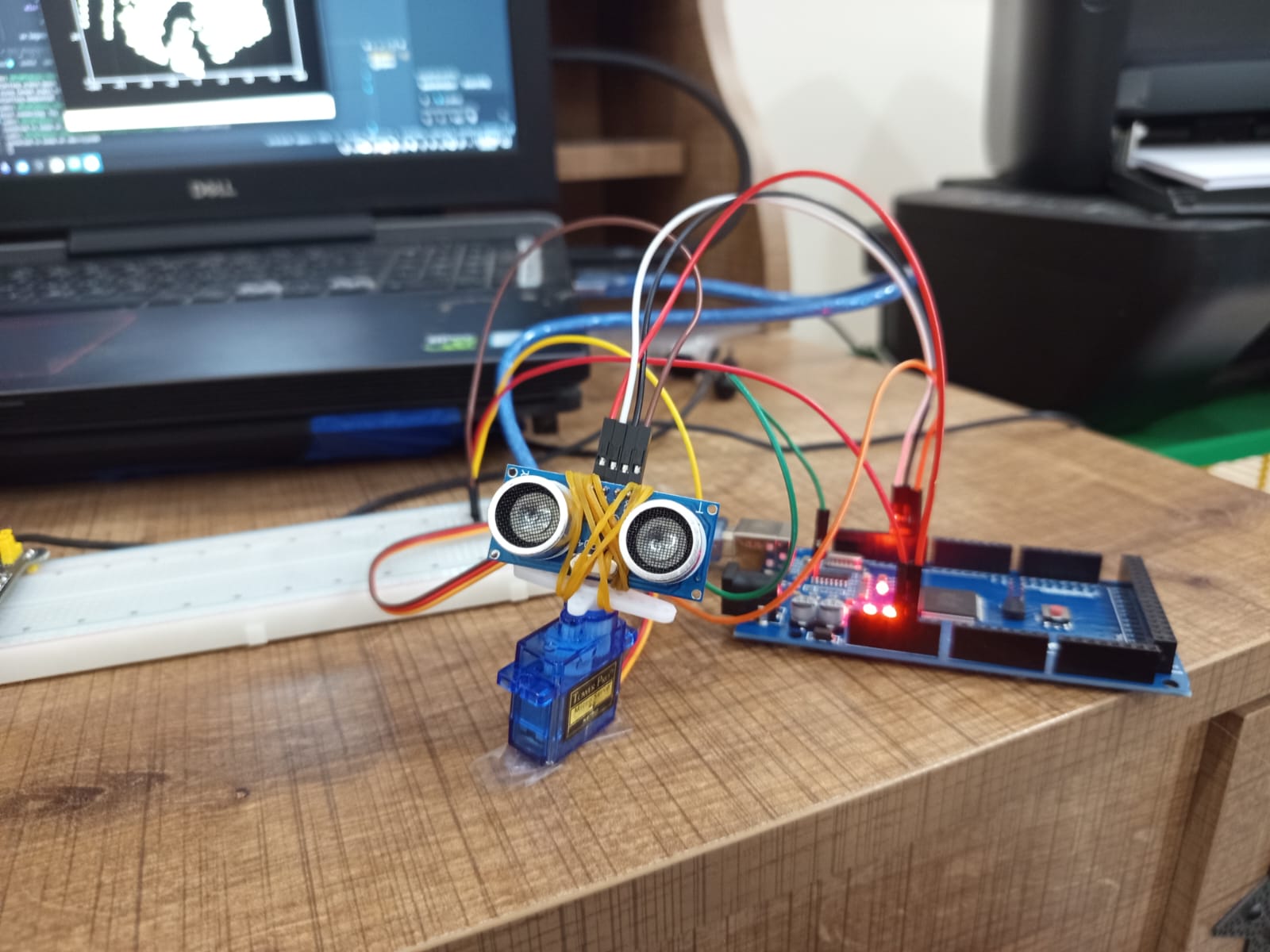

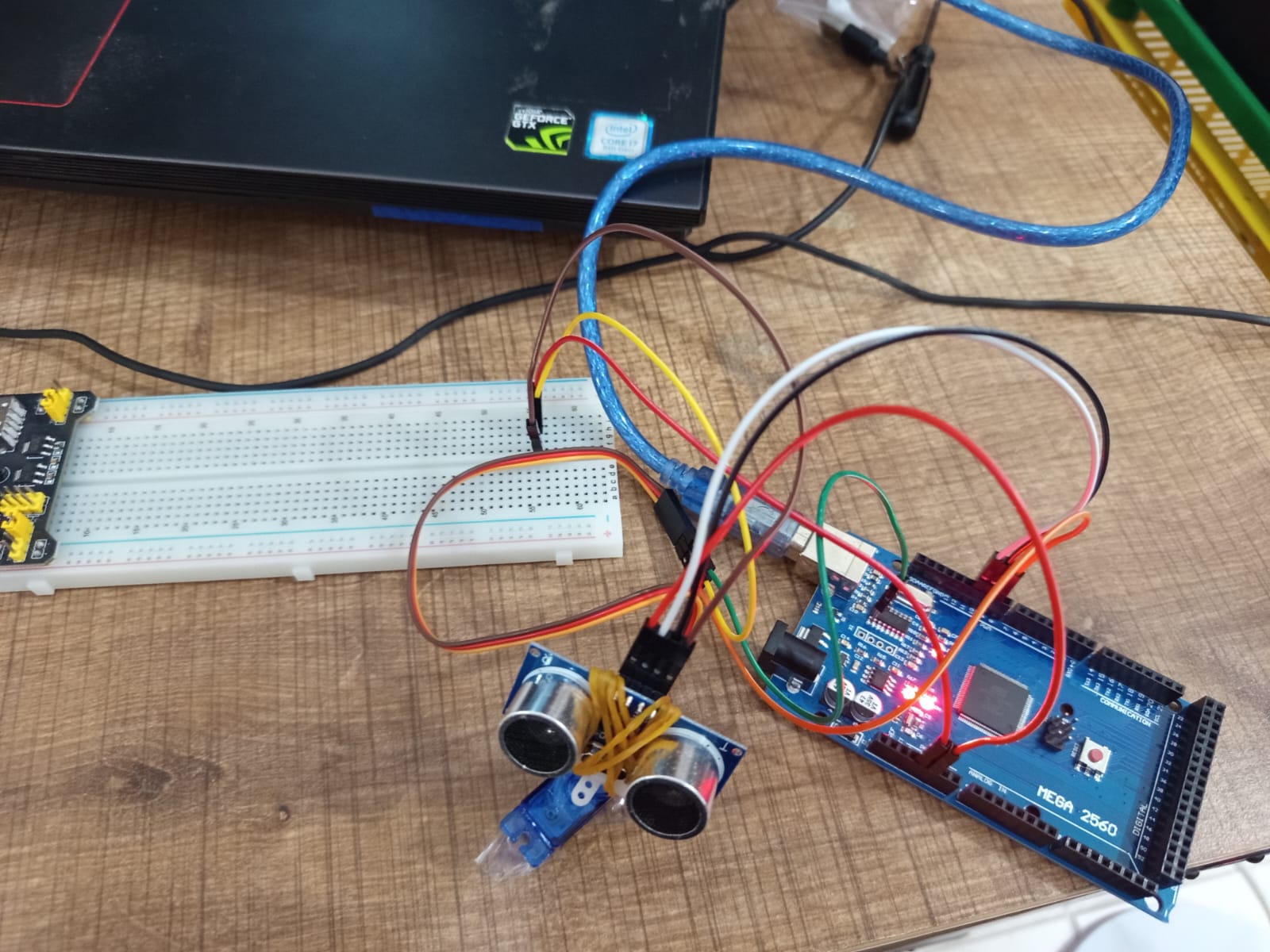

Technical Execution: The Math of Mapping

The hardware side of this project is a distance sensor rotated by a servo and driven by an Arduino, which streams readings to a computer over a USB serial connection. However, the Arduino only sends data in polar coordinates: the angle of the servo and the distance of the ping.
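As a rough illustration of the receiving end, here is a minimal sketch of how one incoming reading might be parsed. The wire format is an assumption: the write-up does not specify it, so the sketch supposes each serial line looks like `angle,distance` (degrees and centimeters) and that the servo sweeps 0 to 180 degrees. The function name `parse_reading` is hypothetical.

```python
from typing import Optional, Tuple


def parse_reading(line: str) -> Optional[Tuple[float, float]]:
    """Parse one text line into (angle_deg, distance_cm).

    Assumed (hypothetical) format: "angle,distance", e.g. "45,120".
    Returns None for malformed or out-of-range lines so the caller can skip them.
    """
    parts = line.strip().split(",")
    if len(parts) != 2:
        return None
    try:
        angle_deg = float(parts[0])
        distance_cm = float(parts[1])
    except ValueError:
        return None
    # Assumption: a 0-180 degree servo sweep and strictly positive distances.
    if not (0.0 <= angle_deg <= 180.0) or distance_cm <= 0.0:
        return None
    return angle_deg, distance_cm


# Example: a well-formed line and a garbled one.
print(parse_reading("45,120\n"))
print(parse_reading("garbage"))
```

In a live setup, the lines would come from the serial port (for example via a serial library's line-reading call) rather than from string literals; dropping malformed lines early keeps partial transmissions out of the plot.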

To plot this on a standard computer screen, I built a real-time data translation pipeline in Python. Using the math library, I wrote a function that converts the incoming polar coordinates into Cartesian coordinates with standard trigonometry: x = distance * cos(angle) and y = distance * sin(angle), where the angle is expressed in radians, as Python's math.cos and math.sin expect. This let matplotlib plot the sweeping data points onto a live 2D grid.
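The conversion can be sketched in a few lines. This is an illustrative version, not the project's actual function: the name `polar_to_cartesian` is hypothetical, and it assumes the servo angle arrives in degrees, so it is converted to radians before the trigonometric calls.

```python
import math


def polar_to_cartesian(angle_deg, distance_cm):
    """Convert a (servo angle, ping distance) pair to (x, y) in centimeters.

    Assumes the angle is given in degrees; math.cos and math.sin take radians.
    """
    theta = math.radians(angle_deg)
    x = distance_cm * math.cos(theta)
    y = distance_cm * math.sin(theta)
    return x, y


# A ping straight ahead along the x-axis, and one at a right angle to it.
print(polar_to_cartesian(0, 5))
print(polar_to_cartesian(90, 10))
```

Skipping the degree-to-radian step is an easy mistake to make here, and it scatters points across the grid in a way that can look like sensor noise.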

Architecting the AI: Spatial Clustering (DBSCAN)

Once the data was mapped to a grid, I needed a model to make sense of it. Standard clustering algorithms like K-Means require the number of clusters to be specified in advance, which is impossible in an unknown environment where the number of objects is exactly what you are trying to find out.

Instead, I used DBSCAN (Density-Based Spatial Clustering of Applications with Noise) from the scikit-learn library. I configured the algorithm with two parameters: a neighborhood radius of 15 cm (eps=15) and a minimum density of three points (min_samples=3).

- If the radar detects three or more points clustered tightly together, the AI classifies it as a solid, physical object and highlights it in a distinct color.

- If a data point is isolated or scattered, the AI mathematically determines it to be an erroneous "ghost echo," labels it as -1, and dims it out as gray background noise.
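The two rules above can be sketched with scikit-learn's `DBSCAN` class. This is a minimal example under stated assumptions: points are (x, y) pairs in centimeters, and the helper names `cluster_points` and `label_to_color` are hypothetical. The color scheme, which uses matplotlib's `C0` to `C9` cycle names for objects and gray for noise, is just one possible choice.

```python
import numpy as np
from sklearn.cluster import DBSCAN


def cluster_points(points_cm, eps_cm=15.0, min_samples=3):
    """Return one DBSCAN label per point; -1 marks noise ("ghost echoes")."""
    X = np.asarray(points_cm, dtype=float)
    if X.size == 0:
        return np.empty(0, dtype=int)
    return DBSCAN(eps=eps_cm, min_samples=min_samples).fit_predict(X)


def label_to_color(label):
    """Map a cluster label to a matplotlib color name (illustrative scheme)."""
    return "gray" if label == -1 else f"C{label % 10}"


# Three pings close together (one object) plus one isolated ping.
points = [(0, 0), (5, 0), (0, 5), (100, 100)]
labels = cluster_points(points)
print(list(labels))                        # [0, 0, 0, -1]
print([label_to_color(l) for l in labels])
```

Note that in scikit-learn, `min_samples` counts the point itself, so with `min_samples=3` a point needs two neighbors within `eps` to anchor a cluster.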

Technical Growth and Takeaways

On a small scale, this project applies the same basic idea that LIDAR perception in autonomous vehicles relies on: grouping nearby point returns into objects and discarding stray ones. It closed a large gap in my understanding of spatial computing, and showed me that mechatronics is not just about building a sensor that can "see," but about writing the algorithms that let the computer "understand" what it is looking at.